Recently, one of our readers asked us how to optimize the robots.txt file to improve SEO.

The robots.txt file tells search engines how to crawl your website, which makes it an incredibly powerful SEO tool.

In this article, we will show you some tips on how to create a perfect robots.txt file for SEO.

What Is a Robots.txt File?

Robots.txt is a text file that website owners can create to tell search engine bots how to crawl and index pages on their sites.

It is typically stored in the root directory (also known as the main folder) of your website. The basic format for a robots.txt file looks like this:

User-agent: [user-agent name]
Disallow: [URL string not to be crawled]

User-agent: [user-agent name]
Allow: [URL string to be crawled]

Sitemap: [URL of your XML Sitemap]

You can have multiple lines of instructions to allow or disallow specific URLs and add multiple sitemaps. If you do not disallow a URL, then search engine bots assume that they are allowed to crawl it.
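You can try this default-allow behavior yourself with the `urllib.robotparser` module in Python's standard library. This is a minimal sketch, not Google's parser. Python's implementation handles simple prefix rules like these but differs from Google on some edge cases. The `/private/` and `/blog/` paths are placeholders.

```python
from urllib.robotparser import RobotFileParser

# A robots.txt with a single group: one Disallow rule that applies to every bot.
robots_txt = """\
User-agent: *
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

# /private/ is blocked because a Disallow rule matches it...
print(parser.can_fetch("*", "https://example.com/private/report.html"))  # False
# ...while nothing mentions /blog/, so crawling it is allowed by default.
print(parser.can_fetch("*", "https://example.com/blog/hello-world/"))    # True
```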

Here is an example of what a robots.txt file can look like:

User-Agent: *
Allow: /wp-content/uploads/
Disallow: /wp-content/plugins/
Disallow: /wp-admin/

Sitemap: https://example.com/sitemap_index.xml

In the above robots.txt example, we have allowed search engines to crawl and index files in our WordPress uploads folder.

After that, we disallowed search bots from crawling and indexing plugins and WordPress admin folders.

Lastly, we have provided the URL of our XML sitemap.
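To double-check that walkthrough, the sketch below feeds the same example file to Python's `urllib.robotparser`. The `site_maps()` method needs Python 3.8 or later. The individual file paths inside each folder are made-up placeholders.

```python
from urllib.robotparser import RobotFileParser

# The example robots.txt from above, one directive per line.
robots_txt = """\
User-Agent: *
Allow: /wp-content/uploads/
Disallow: /wp-content/plugins/
Disallow: /wp-admin/

Sitemap: https://example.com/sitemap_index.xml
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

# Placeholder files inside each folder mentioned in the rules.
for path in (
    "/wp-content/uploads/2024/01/photo.jpg",      # allowed
    "/wp-content/plugins/some-plugin/script.js",  # blocked
    "/wp-admin/options.php",                      # blocked
):
    allowed = parser.can_fetch("Googlebot", "https://example.com" + path)
    print(path, "->", "allowed" if allowed else "blocked")

print(parser.site_maps())  # ['https://example.com/sitemap_index.xml']
```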

Do You Need a Robots.txt File for Your WordPress Site?

If you don’t have a robots.txt file, then search engines will still crawl and index your website. However, you will not be able to tell them which pages or folders they should not crawl.

This won’t have much impact when you first start a blog and don’t have a lot of content.

However, as your website grows and you add more content, then you will likely want better control over how your website is crawled and indexed.

Here is why.

Search bots have a crawl quota for each website.

This means that they crawl a certain number of pages during a crawl session. If they use up their crawl budget before they finish crawling all pages on your site, then they will come back and resume crawling in the next session.

This can slow down your website indexing rate.

You can fix this by disallowing search bots from attempting to crawl unnecessary pages like your WordPress admin pages, plugin files, and themes folder.

By disallowing unnecessary pages, you save your crawl quota. This helps search engines crawl even more pages on your site and index them as quickly as possible.
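The arithmetic behind this reasoning can be sketched in a few lines. The model is deliberately simplified and hypothetical. Search engines do not publish a fixed per-session quota, and the page counts and the budget of 200 URLs per session are invented for illustration. The sketch only shows why taking URLs out of the crawl queue can mean fewer sessions before every useful page has been visited.

```python
import math

def sessions_needed(urls_to_crawl: int, budget_per_session: int) -> int:
    """Sessions a bot needs if it fetches at most budget_per_session URLs
    per visit (a toy model; real crawl budgets are not fixed numbers)."""
    return math.ceil(urls_to_crawl / budget_per_session)

# Hypothetical site: 500 posts and pages worth indexing, plus 300 admin,
# plugin, and theme URLs that add nothing to search results.
useful_urls, unnecessary_urls, budget = 500, 300, 200

print(sessions_needed(useful_urls + unnecessary_urls, budget))  # 4
print(sessions_needed(useful_urls, budget))                     # 3 once the extras are disallowed
```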

Another good reason to use a robots.txt file is when you want to stop search engines from indexing a post or page on your website.

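This is not the safest way to hide content from the general public, but it can help keep content out of search results. Keep in mind that robots.txt controls crawling rather than indexing, so a disallowed URL can still show up in search results if other pages link to it. A noindex meta tag is the more reliable way to keep a page out of search results.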
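If you do block a single post or page, remember that robots.txt rules match URL paths by prefix. The sketch below uses Python's `urllib.robotparser` to show why the trailing slash matters. The permalinks `/sample-post/` and `/sample-post-2/` are hypothetical, and `is_blocked` is an illustrative helper.

```python
from urllib.robotparser import RobotFileParser

def is_blocked(robots_txt: str, path: str) -> bool:
    """True if robots_txt stops a generic crawler from fetching path."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch("*", "https://example.com" + path)

# Hypothetical goal: hide only the post at /sample-post/.
without_slash = "User-agent: *\nDisallow: /sample-post\n"
with_slash = "User-agent: *\nDisallow: /sample-post/\n"

print(is_blocked(without_slash, "/sample-post/"))    # True
print(is_blocked(without_slash, "/sample-post-2/"))  # True: it shares the prefix
print(is_blocked(with_slash, "/sample-post/"))       # True
print(is_blocked(with_slash, "/sample-post-2/"))     # False
```

Google also matches rule paths from the start of the URL path, so the same advice about trailing slashes applies there.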

What Does an Ideal Robots.txt File Look Like?

Many popular blogs use a very simple robots.txt file. The exact rules vary depending on the needs of each site, but a minimal version looks like this:

User-agent: *
Disallow:

Sitemap: http://www.example.com/post-sitemap.xml
Sitemap: http://www.example.com/page-sitemap.xml

This robots.txt file allows all bots to index all content and provides them with a link to the website’s XML sitemaps.

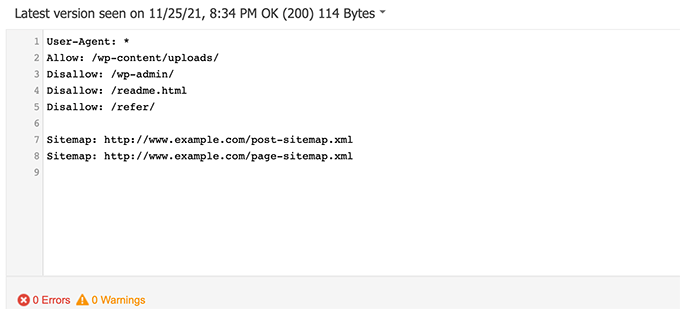
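As a quick sanity check, here is how Python's `urllib.robotparser` reads that minimal file. The sketch assumes Python 3.8+ for `site_maps()`, and `Bingbot` and the page path are arbitrary examples. An empty `Disallow:` value blocks nothing. `Sitemap` lines are collected no matter which group they sit near.

```python
from urllib.robotparser import RobotFileParser

robots_txt = """\
User-agent: *
Disallow:

Sitemap: http://www.example.com/post-sitemap.xml
Sitemap: http://www.example.com/page-sitemap.xml
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

# An empty Disallow value blocks nothing, so every path is crawlable.
print(parser.can_fetch("Bingbot", "http://www.example.com/any/page/"))  # True

# Both sitemap URLs are picked up, in the order they appear.
for sitemap in parser.site_maps():
    print(sitemap)
```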

For WordPress sites, we recommend the following rules in the robots.txt file:

User-Agent: *
Allow: /wp-content/uploads/
Disallow: /wp-admin/
Disallow: /readme.html
Disallow: /refer/

Sitemap: http://www.example.com/post-sitemap.xml
Sitemap: http://www.example.com/page-sitemap.xml

This tells search bots to crawl all WordPress images and files. It disallows search bots from crawling the WordPress admin area, the readme file, and cloaked affiliate links (on our site, these use the /refer/ prefix).

By adding sitemaps to the robots.txt file, you make it easy for Google bots to find all the pages on your site.
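Before copying these rules, you can check what they will and won’t block. This sketch runs them through Python’s `urllib.robotparser`. The image, post, and `/refer/some-product/` URLs are hypothetical examples of the kinds of URLs described above.

```python
from urllib.robotparser import RobotFileParser

robots_txt = """\
User-Agent: *
Allow: /wp-content/uploads/
Disallow: /wp-admin/
Disallow: /readme.html
Disallow: /refer/

Sitemap: http://www.example.com/post-sitemap.xml
Sitemap: http://www.example.com/page-sitemap.xml
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

paths = [
    "/wp-content/uploads/2024/05/logo.png",  # an uploaded image: allowed
    "/hello-world/",                         # a regular post: allowed
    "/wp-admin/edit.php",                    # the admin area: blocked
    "/readme.html",                          # the WordPress readme: blocked
    "/refer/some-product/",                  # a cloaked affiliate link: blocked
]
for path in paths:
    allowed = parser.can_fetch("Googlebot", "http://www.example.com" + path)
    label = "allowed" if allowed else "blocked"
    print(f"{label:<8} {path}")
```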

Now that you know what an ideal robots.txt file looks like, let’s take a look at how you can create a robots.txt file in WordPress.

How to Create a Robots.txt File in WordPress

There are three ways to create a robots.txt file in WordPress. You can choose the method that works best for you.

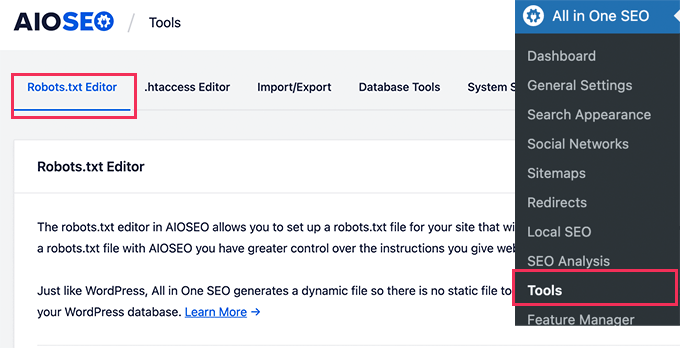

Method 1: Editing Robots.txt File Using All in One SEO

All in One SEO, also known as AIOSEO, is the best WordPress SEO plugin on the market, used by over 3 million websites. It’s easy to use and comes with a robots.txt file generator.

To learn more, see our detailed AIOSEO review.

If you don’t already have the AIOSEO plugin installed, you can see our step-by-step guide on how to install a WordPress plugin.

Note: A free version of AIOSEO is also available and has this feature.

Once the plugin is installed and activated, you can use it to create and edit your robots.txt file directly from your WordPress admin area.

Simply go to All in One SEO » Tools to edit your robots.txt file.

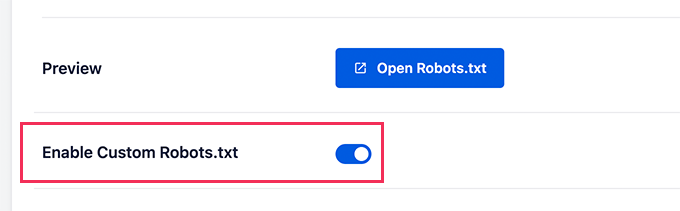

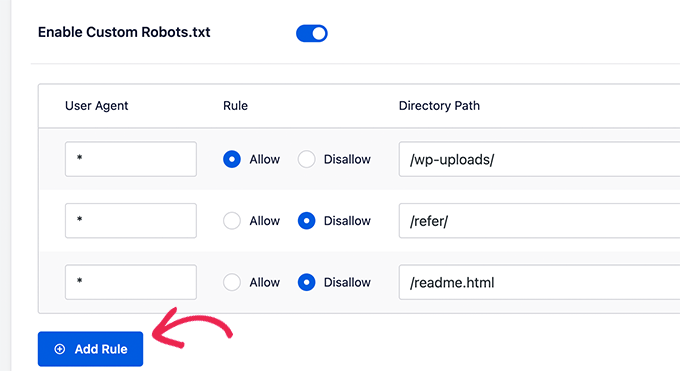

First, you’ll need to turn on the editing option by clicking the ‘Enable Custom Robots.txt’ toggle so that it turns blue.

With this toggle on, you can create a custom robots.txt file in WordPress.

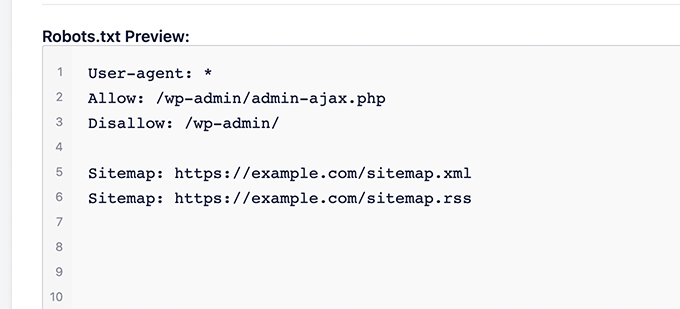

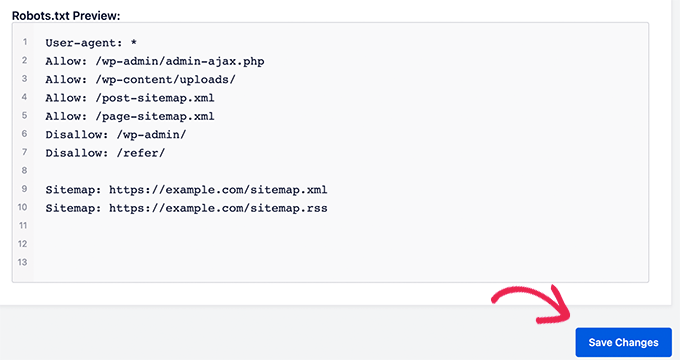

All in One SEO will show your existing robots.txt file in the ‘Robots.txt Preview’ section at the bottom of your screen.

This version will show the default rules that were added by WordPress.

These default rules tell the search engines not to crawl your core WordPress files, allow the bots to index all content, and provide them a link to your site’s XML sitemaps.

Now, you can add your own custom rules to improve your robots.txt for SEO.

To add a rule, enter a user agent in the ‘User Agent’ field. Using a * will apply the rule to all user agents.

Then, select whether you want to ‘Allow’ or ‘Disallow’ the search engines to crawl.

Next, enter the filename or directory path in the ‘Directory Path’ field.

The rule will automatically be applied to your robots.txt. To add another rule, just click the ‘Add Rule’ button.

We recommend adding rules until you create the ideal robots.txt format we shared above.

Your custom rules will look like this.

Once you are done, don’t forget to click on the ‘Save Changes’ button to store your changes.
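Each rule you add corresponds to one line in the generated file, grouped under its user agent. The Python sketch below illustrates that mapping with a hypothetical `build_robots_txt` helper. It is not AIOSEO’s code, and the plugin’s exact output formatting may differ.

```python
from __future__ import annotations

def build_robots_txt(rules: list[tuple[str, str, str]], sitemaps: list[str]) -> str:
    """Turn (user agent, action, path) rows into robots.txt text.

    Rows that share a user agent are grouped under one User-agent line,
    in the order they were added."""
    groups: dict[str, list[str]] = {}
    for agent, action, path in rules:
        if action not in ("Allow", "Disallow"):
            raise ValueError(f"unknown action: {action!r}")
        groups.setdefault(agent, []).append(f"{action}: {path}")

    blocks = ["\n".join([f"User-agent: {agent}", *lines]) for agent, lines in groups.items()]
    if sitemaps:
        blocks.append("\n".join(f"Sitemap: {url}" for url in sitemaps))
    return "\n\n".join(blocks) + "\n"

rules = [
    ("*", "Allow", "/wp-content/uploads/"),
    ("*", "Disallow", "/wp-admin/"),
    ("*", "Disallow", "/readme.html"),
    ("*", "Disallow", "/refer/"),
]
print(build_robots_txt(rules, [
    "http://www.example.com/post-sitemap.xml",
    "http://www.example.com/page-sitemap.xml",
]))
```

With these rows, the output matches the recommended WordPress rules shown earlier in this article.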

Method 2: Editing Robots.txt File Using WPCode

WPCode is a powerful code snippets plugin that lets you add custom code to your website easily and safely.

It also includes a handy feature that lets you quickly edit the robots.txt file.

Note: There is also a WPCode Free Plugin, but it doesn’t include the file editor feature.

The first thing you need to do is install the WPCode plugin. For step-by-step instructions, see our beginner’s guide on how to install a WordPress plugin.

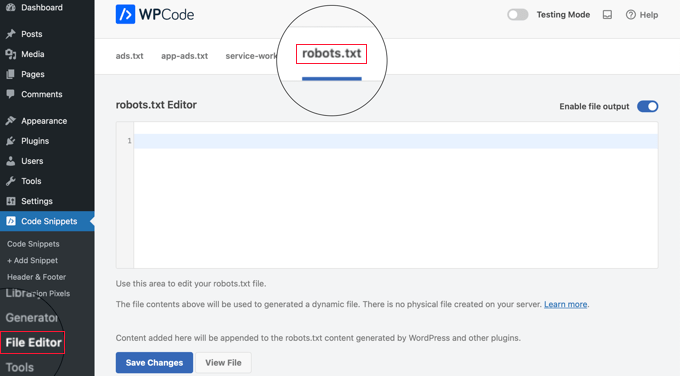

On activation, you need to navigate to the WPCode » File Editor page. Once there, simply click on the ‘robots.txt’ tab to edit the file.

Now, you can paste or type the contents of the robots.txt file.

Once you are finished, make sure you click the ‘Save Changes’ button at the bottom of the page to store the settings.

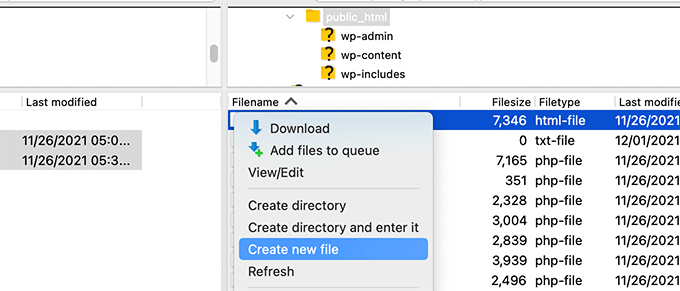
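After saving, it is worth confirming that your site actually serves the new file. Crawlers only look for robots.txt at the root of each protocol and host combination, so `https://www.example.com` and `https://example.com` are checked separately. The sketch below assumes Python 3. The names `robots_url` and `fetch_live_rules` are hypothetical helpers, and `fetch_live_rules` makes a real network request.

```python
from urllib.parse import urlsplit, urlunsplit
from urllib.robotparser import RobotFileParser

def robots_url(page_url: str) -> str:
    """Return the robots.txt URL that governs page_url (same scheme,
    host, and port, with the path replaced by /robots.txt)."""
    parts = urlsplit(page_url)
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))

def fetch_live_rules(site_url: str) -> RobotFileParser:
    """Download and parse the robots.txt the site is serving right now."""
    parser = RobotFileParser(robots_url(site_url))
    parser.read()  # performs an HTTP request to the live site
    return parser

print(robots_url("https://www.example.com/blog/hello-world/?ref=home"))
# https://www.example.com/robots.txt

# Against your own site (requires network access):
# rules = fetch_live_rules("https://www.example.com/")
# print(rules.can_fetch("Googlebot", "https://www.example.com/wp-admin/"))
```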

Method 3: Editing Robots.txt File Manually Using FTP

For this method, you will need to use an FTP client to edit the robots.txt file. Alternatively, you can use the file manager provided by your WordPress hosting.

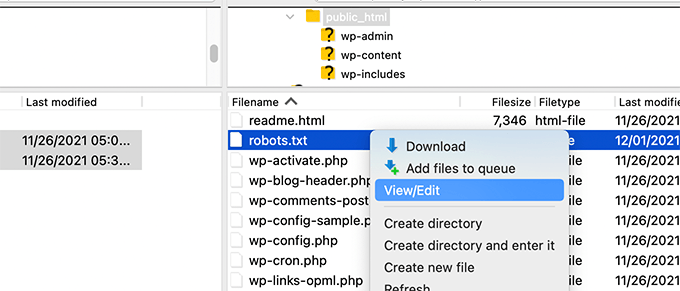

Simply connect to your WordPress website files using an FTP client.

Once inside, you will be able to see the robots.txt file in your website’s root folder.

If you don’t see one, then you likely don’t have a robots.txt file.

In that case, you can just go ahead and create one.

Robots.txt is a plain text file, which means you can download it to your computer and edit it using any plain text editor like Notepad or TextEdit.

After saving your changes, you can upload the robots.txt file back to your website’s root folder.
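If you prefer to script this step, here is a minimal Python sketch using the standard-library `ftplib` module. It assumes your host supports FTP over TLS (FTPS). The host name, credentials, and remote folder are placeholders, and `write_robots_txt` and `upload_robots_txt` are hypothetical helper names. Only the local part runs in the example; the upload is left as a commented-out call.

```python
import ftplib
import tempfile
from pathlib import Path

def write_robots_txt(folder: Path, content: str) -> Path:
    """Save robots.txt as plain UTF-8 text with Unix line endings."""
    path = folder / "robots.txt"
    with open(path, "w", encoding="utf-8", newline="\n") as fh:
        fh.write(content)
    return path

def upload_robots_txt(path: Path, host: str, user: str, password: str,
                      remote_root: str) -> None:
    """Upload robots.txt into the site's root folder over FTPS."""
    with ftplib.FTP_TLS(host) as ftp:
        ftp.login(user, password)  # secures the control connection first
        ftp.prot_p()               # encrypt the file transfer as well
        ftp.cwd(remote_root)       # the folder that holds wp-admin, wp-content, etc.
        with open(path, "rb") as fh:
            ftp.storbinary("STOR robots.txt", fh)

# Dry run: write the file into a scratch folder and read it back.
with tempfile.TemporaryDirectory() as scratch:
    saved = write_robots_txt(Path(scratch), "User-agent: *\nDisallow: /wp-admin/\n")
    saved_bytes = saved.read_bytes()
    print(saved_bytes.decode("utf-8"))

# Real upload (placeholders; requires network access and your FTP details):
# local_file = write_robots_txt(Path("."), "User-agent: *\nDisallow: /wp-admin/\n")
# upload_robots_txt(local_file, "ftp.example.com", "username", "password", "/public_html")
```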

How to Test Your Robots.txt File

Once you have created your robots.txt file, it’s always a good idea to test it using a robots.txt tester tool.

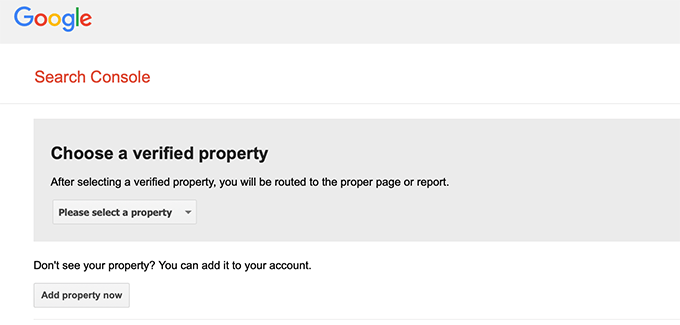

There are many robots.txt tester tools out there, but we recommend using the one inside Google Search Console.

First, you will need to have your website linked with Google Search Console. If you haven’t done this yet, see our guide on how to add your WordPress site to Google Search Console.

Then, you can use the Google Search Console Robots Testing Tool.

Simply select your property from the dropdown list.

The tool will automatically fetch your website’s robots.txt file and highlight the errors and warnings if it finds any.
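Search Console remains the authority on how Google reads your file, but a quick local check can catch typos before you upload. The sketch below uses Python’s `urllib.robotparser` with a hypothetical `find_mismatches` helper and placeholder URLs. It deliberately shows one caveat. Python applies the first rule that matches, while Google applies the most specific (longest) matching rule. The two can therefore disagree about `Allow` exceptions such as the `admin-ajax.php` line found in many WordPress default files.

```python
from __future__ import annotations

from urllib.robotparser import RobotFileParser

def find_mismatches(robots_txt: str, site: str, expected: dict[str, bool],
                    agent: str = "Googlebot") -> list[str]:
    """Return the paths whose crawlability differs from what you expect."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    mismatches = []
    for path, should_allow in expected.items():
        allowed = parser.can_fetch(agent, site + path)
        if allowed != should_allow:
            mismatches.append(f"{path} is {'allowed' if allowed else 'blocked'}")
    return mismatches

expected = {
    "/hello-world/": True,             # a regular post
    "/wp-admin/options.php": False,    # the admin area
    "/wp-admin/admin-ajax.php": True,  # Google allows this: the longer Allow rule wins
}

disallow_first = """\
User-agent: *
Disallow: /wp-admin/
Allow: /wp-admin/admin-ajax.php
"""
allow_first = """\
User-agent: *
Allow: /wp-admin/admin-ajax.php
Disallow: /wp-admin/
"""

# Python stops at the first matching rule, so it reports a false alarm here.
print(find_mismatches(disallow_first, "https://example.com", expected))
# ['/wp-admin/admin-ajax.php is blocked']
print(find_mismatches(allow_first, "https://example.com", expected))
# []
```

Listing the more specific `Allow` line before the broader `Disallow` line makes Python’s first-match result agree with Google’s longest-match result.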

Final Thoughts

The goal of optimizing your robots.txt file is to prevent search engines from crawling pages that are not meant for public viewing. For example, files in your /wp-content/plugins/ folder or pages in your WordPress admin folder.

A common myth among SEO experts is that blocking WordPress categories, tags, and archive pages will improve the crawl rate and result in faster indexing and higher rankings.

This is not true. It’s also against Google’s webmaster guidelines.

We recommend that you follow the above robots.txt format to create a robots.txt file for your website.

Expert Guides on Using Robots.txt in WordPress

Now that you know how to optimize your robots.txt file, you may like to see some other articles related to using robots.txt in WordPress.

- Glossary: Robots.txt

- How to Hide a WordPress Page From Google

- How to Stop Search Engines from Crawling a WordPress Site

- How to Permanently Delete a WordPress Site From the Internet

- How to Easily Hide (Noindex) PDF Files in WordPress

- How to Fix “Googlebot cannot access CSS and JS files” Error in WordPress

- How to Setup All in One SEO for WordPress Correctly (Ultimate Guide)

We hope this article helped you learn how to optimize your WordPress robots.txt file for SEO. You may also want to see our ultimate WordPress SEO guide and our expert picks for the best WordPress SEO tools to grow your website.

If you liked this article, then please subscribe to our YouTube Channel for WordPress video tutorials. You can also find us on Twitter and Facebook.


Steve says

Thanks for this. How does it work on a WP Multisite, though?

WPBeginner Support says

For a multisite, you would need to have a robots.txt file in the root folder of each site.

Admin

Pacifique Ndanyuzwe says

My WordPress site is new, and my robots.txt by default is:

user-agent: *
Disallow: /wp-admin/
Allow: /wp-admin/admin-ajax.php

I want Google to crawl and index my content. Is that robots.txt okay?

WPBeginner Support says

You can certainly use that if you want.

Admin

Ritesh Seth says

Great article…

I was confused for so many days about the robots.txt file and Disallow links. I have copied the tags for the robots file. I hope this will solve the issue with my site.

WPBeginner Support says

We hope our article will help as well

Admin

Kurt says

The files in the screenshots of your home folder are actually located under the public_html folder under my home folder.

I did not have a /refer folder under my public_html folder.

I did not have post or page xml files anywhere on my WP account.

I did include an entry in the robots.txt file I created to disallow crawling my sandbox site. I’m not sure that’s necessary since I’ve already selected the option in WP telling crawlers not to crawl my sandbox site, but I don’t think it will hurt to have the entry.

WPBeginner Support says

Some hosts do rename public_html to home, which is why you see it there. You would want to ensure Yoast is active for the XML files to be available. The method in this article is an additional precaution to help prevent your site from being crawled.

Admin

Ahmed says

Great article

WPBeginner Support says

Thank you

Admin

ASHOK KUMAR JADON says

Hello, such a nice article; you solved my problem. Thank you so much.

WPBeginner Support says

Glad our article could help

Admin

Elyn Ashton says

User-agent: *
Disallow: /wp-admin/
Allow: /wp-admin/admin-ajax.php

This is my robots.txt code, but I’m confused about why my /wp-admin is indexed. How do I noindex it?

WPBeginner Support says

If it was indexed previously, you may need to give the search engine’s cache time to clear.

Admin

Ashish kumar says

This website really inspired me to start a blog. Thank you a lot, team. Each and every article on this website is rich in information and explanation. Whenever I have a problem, I visit this blog first. Thank you

WPBeginner Support says

Glad our articles can be helpful

Admin

Anna says

I am trying to optimise robots for my website using Yoast. However Tools in Yoast does not have the option for ‘File Editor’.

There are just two options

(i) Import and Export

(ii) Bulk editor

Could you please advise how this can be addressed? Could it be that I am on a free edition of Yoast?

WPBeginner Support says

The free version of Yoast still has the option. Your installation may be disallowing file editing, in which case you would likely need to use the FTP method.

Admin

Emmanuel Husseni says

I really find this article helpful because I didn’t know much about how robots.txt works, but now I do.

Please, what I don’t understand is how to find the best robots.txt format to use on my site (I mean one that works generally).

I noticed that lots of big blogs I check that rank high on search engines use different robots.txt formats.

I’ll be glad to see a reply from you or anyone who can help.

Editorial Staff says

Having a sitemap and allowing the areas that need to be allowed is the most important part. The disallow part will vary based on each site. We shared a sample in our blog post, and that should be good for most WordPress sites.

Admin

WPBeginner Support says

Hey Emmanuel,

Please see the section regarding the ideal robots.txt file. It depends on your own requirements. Most bloggers exclude WordPress admin and plugin folders from the crawl.

Admin

Emmanuel Husseni says

Thank you so much.

Now I understand. I guess I’ll start with the general format for now.

jack says

Well-written article. I recommend that users create a sitemap before creating and enabling their robots.txt file; it will help your site get crawled faster and indexed easily.

Jack

Connie S Owens says

I would like to stop the search engines from indexing my archives during their crawl.

Emmanuel Nonye says

Thanks a lot, this article was really helpful.

Cherisa says

I keep getting the error message below in Google Webmaster Tools. I am basically stuck. A few things that were not clear to me in this tutorial are where to find my site’s root files, how to tell whether I already have a “robots.txt”, and how to edit it.

WPBeginner Support says

Hi Cherisa,

Your site’s root folder is the one that contains folders like wp-admin, wp-includes, wp-content, etc. It also contains files like wp-config.php, wp-cron.php, wp-blog-header.php, etc.

If you cannot see a robots.txt file in this folder, then you don’t have one. You can go ahead and create a new one.

Admin

Cherisa says

Thank you for your response. I have looked everywhere and can’t seem to locate these root files as you describe. Is there a path I can follow that leads to this folder, for example under Settings?

Devender says

I had decent traffic to my website, but it suddenly dropped to zero in May. I have been facing the issue ever since. Please help me recover my website.

Haris Aslam says

Hello there, thank you for this information, but I have a question.

I just created the sitemap.xml and robots.txt files, and they are being crawled well. But how can I create a “product-sitemap.xml”?

All of my products are listed in the sitemap.xml file. Do I have to create a product-sitemap.xml separately and submit it to Google or Bing again?

Can you please help me out…

Thank you

Mahadi Hassan says

I have a problem with my robots.txt settings. Only one robots.txt file is showing for all my websites. I have a separate robots.txt file for each individual website, but the browser shows the same one for all of them. Please help me show each website’s own robots.txt file.

Debu Majumdar says

Please explain why you included

Disallow: /refer/

in the beginner robots.txt example. I do not understand the implications of this line. Is this important for a beginner? You have explained the other two disallowed ones.

Thanks.

WPBeginner Support says

Hi Debu,

This example was from WPBeginner’s robots.txt file. At WPBeginner we use ThirstyAffiliates to manage affiliate links and cloak URLs. Those URLs have /refer/ in them, that’s why we block them in our robots.txt file.

Admin

Evaristo says

How can I put all tags/mydomain.com pages in nofollow in robots.txt to concentrate the link juice? Thanks.

harsh kumar says

Hey, I am getting an error in Yoast SEO regarding the sitemap. Once I click on ‘Fix it’, it comes back again. My site’s HTML is not loading properly.

Tom says

I’ve just been reviewing my Google Webmaster Tools account and using the Search Console, I’ve found the following:

Page partially loaded

Not all page resources could be loaded. This can affect how Google sees and understands your page. Fix availability problems for any resources that can affect how Google understands your page.

This is because all CSS stylesheets associated with Plugins are disallowed by the default robots.txt.

I understand good reasons why I shouldn’t just make this allowable, but what would be an alternative as I would suspect the Google algorithms are marking down the site for not seeing these.

Suren says

Hi,

Whenever I search for my site on Google, this text appears below the link: “A description for this result is not available because of this site’s robots.txt”

How can I solve this issue?

Regards

WPBeginner Support says

Hi Suren,

It seems like someone accidentally changed your site’s privacy settings. Go to the Settings » Reading page and scroll down to the ‘Search engine visibility’ section. Make sure that the box next to ‘Discourage search engines from indexing this site’ is unchecked.

Admin

Divyesh says

Hello

As I saw in Webmaster Tools, my robots.txt file looks like this:

User-agent: *
Disallow: /wp-admin/
Allow: /wp-admin/admin-ajax.php

Let me know: is that okay, or should I use something else?

John Cester says

I want to know: is it a good idea to block (disallow) “/wp-content/plugins/” in robots.txt? Every time I remove a plugin, some pages of that plugin show a 404 error.

Himanshu singh says

I loved this explanation. As a beginner, I was very confused about the robots.txt file and its uses. But now I know its purpose.

rahul says

In some robots.txt files, index.php has been disallowed. Can you explain why? Is it a good practice?

Waleed Barakat says

Thanks for passing along this precious info.

Awais Ahmed says

Can you please tell me why this is happening in Webmaster Tools:

Network unreachable: robots.txt unreachable. We were unable to crawl your Sitemap because we found a robots.txt file at the root of your site but were unable to download it. Please ensure that it is accessible or remove it completely.

The robots.txt file exists, but I still get this error.

Dozza says

Interesting update from the Yoast team on this at

Quote: “The old best practices of having a robots.txt that blocks access to your wp-includes directory and your plugins directory are no longer valid.”

natveimaging says

Allow: /wp-content/uploads/

Shouldn’t this be?

Disallow: /wp-content/uploads/

Because you are aware that Google will index all your uploads pages as public URLs, right? And then you will get slapped with errors for the page itself. Is there something I am missing here?

nativeimaging says

Overall, it’s the actual pages that Google crawls to generate image maps, NOT the uploads folders. Otherwise, you would also have the problem of all the smaller image sizes and other UI images getting indexed.

This seems to be the best option:

Disallow: /wp-content/uploads/

If I’m incorrect, please explain this to me so I can understand your angle here.

Jason says

My blog URL is not being indexed. Do I need to change my robots.txt?

I’m using this robots.txt

iyan says

How do I create a robots.txt file that ONLY allows indexing of pages and posts? Thanks.

Simaran Singh says

I am not sure what the problem is, but my robots.txt has two versions.

One at http://www.example.com/robots.txt and second at example.com/robots.txt

Anybody, please help! Let me know what can be the possible cause and how to correct it?

WPBeginner Support says

Most likely, your web host allows your site to be accessed with both www and non-www URLs. Try changing robots.txt using an FTP client, then examine it from both URLs. If you can see your changes on both URLs, then it’s the same file.

Admin

Simaran Singh says

Thanks for the quick reply. I have already done that, but I am not able to see any change. Is there any other way to resolve it?

Martin conde says

Yoast’s blog post about this topic was right above yours in my search, so of course I checked them both. They contradict each other a little. For example, Yoast said that disallowing plugin directories and others might hinder the Google crawlers when fetching your site, since plugins may output CSS or JS. He also mentioned (and this matches my own experience) that Yoast doesn’t add anything sitemap-related to the robots.txt; rather, it generates the sitemap so that you can add it to your Search Console. Here is the link to his post. Maybe you can re-check, because it is very hard to choose whose word to take for it.

MM Nauman says

As I’m not good at creating this robots.txt file, can I use your robots.txt file and just change parameters like the URL and sitemap to my site’s? Is that good, or should I create a different one?

Mohit Chauhan says

Hi,

Today I got this email from Google: “Googlebot cannot access CSS and JS files”. What can be the solution?

Thanks

Parmod says

Let me guess… You are using CDN services to import CSS and JS files.

or

It may be possible that you have written the wrong syntax in these files.

Rahul says

I have a question about adding Sitemaps. How can I add Yahoo and Bing Sitemap to Robots file and WordPress Directory?

Gerbrand Petersen says

Thanks for the elaborate outline of using the robots file. Does anyone know if Yahoo is using this robots.txt too, and does it obey the rules mentioned in the file? I ask this since I have a “Disallow” for a certain page in my file, but I do receive traffic coming from Yahoo on that page. Nothing from Google, as it should be. Thanks in advance.

Erwin says

correction…

“If you are using Yoast’s WordPress SEO plugin or some other plugin to generate your XML sitemap, then your plugin will try to automatically add your sitemap related lines into robots.txt file.”

Not true. WordPress SEO doesn’t add the sitemap to the robots.txt

“I’ve always felt linking to your XML sitemap from your robots.txt is a bit nonsense. You should be adding them manually to your Google and Bing Webmaster Tools and make sure you look at their feedback about your XML sitemap. This is the reason our WordPress SEO plugin doesn’t add it to your robots.txt.”

https://yoast.com/wordpress-robots-txt-example/

Also, it’s more commonly recommended not to disallow the wp-plugins directory (for the reasons, see Yoast’s post).

And personally, I like to simply remove the readme.txt file…

hyma says

I understood the robots.txt file and how to use it. What is a sitemap, and how do I create one for my site?

Rick R. Duncan says

After reading Google’s documentation I’m under the impression that the directive to use in the robots.txt file is disallow which only tells the bots what they can and cannot crawl. It does not tell them what can and cannot be indexed. You need to use the noindex robots meta tag to have a page noindexed.

Nitin says

Really good article on an SEO-optimized robots.txt file. But I’d like you to give a tutorial on how to upload the robots.txt file to the server, as uploading that file seems like a big problem for a beginner.

By the way, thanks for sharing such beneficial information.

-Nitin

Parmod says

Upload it to your server/public_html/(Your-site-name) … in this folder

Jenny says

What is the best way to add code to .htaccess to block multiple spam bot referrers by their URL, and by IP address if no URL is given?

I know that if you get the syntax wrong in .htaccess, it can take your site offline. I am a newbie and need to block these annoying multiple URLs from Russia, China, Ukraine, etc.

Many thanks

Hazel Andrews says

Thanks for those tips….robot txt files now amended! yay!

Rahat says

Why do I have to add Allow?!

If I mention only what has to be disallowed, that should be enough. I don’t have to write code for Allow, because Googlebot or Bingbot will crawl everything else automatically.

So why should I use Allow again?

Connor Rickett says

Since lacking the Robots.txt file doesn’t stop the site from being crawled, I find myself curious: Is there any sort of hard data on exactly how much having the file will improve SEO performance?

I did a quick Google search, and didn’t see any sort of quantitative data on it. It’s about half a million articles saying, “Hey, this makes SEO better!” but I’d really like to know what we’re talking about here, even generally.

Is this a 5% boost? 50? 500?

WPBeginner Support says

Search engines don’t share such data. Not having a robots.txt file does not stop search engines from crawling or indexing a website; however, having one is a recommended best practice.

Admin

Connor Rickett says

Thanks for taking the time to get back to me, I appreciate it!

JD Myers says

Good timing on this. I was trying to find this information just yesterday.

The reason I was searching for it is that Google Webmaster tools was telling me that they could not properly crawl my site because I was blocking various resources needed for the proper rendering of the page.

These resources included those found in /wp-content/plugins/

After I allowed this folder, the warning disappeared.

Any thoughts on this?

WPBeginner Support says

You can safely ignore those warnings. It is only a warning if you actually had content there that you would want to get indexed. Sometimes users have restricted search bots and have forgotten about it. These warnings come in handy in those situations.

Admin

Chetan jadhav says

I have a question: many people out there use a static sitemap even though they have a WordPress site. Should we use a static sitemap or one generated by WordPress?

Wilton Calderon says

Nice, I like the way WPBeginner has it, and with that rank in Alexa, it looks to me like one of the best ways to use robots.txt.

Brigitte Burke says

What does my robots.txt file mean if it looks like this?

User-agent: *
Disallow: /wp-admin/
Disallow: /wp-includes/
Disallow: /xmlrpc.php

Editorial Staff says

It’s just saying that search engines should not index your wp-admin folder, wp-includes folder, and the xmlrpc.php file. Sometimes disallowing /wp-includes/ can block certain scripts for search engines, especially if your site is using those scripts. This can hurt your SEO.

The best thing to do is go to Google Webmaster Tools and fetch your website as a bot there. If everything loads fine, then you have nothing to worry about. If it says that scripts are blocked, then you may want to take out the wp-includes line.

Admin

hercules says

I see no logic in your idea about having a script within the includes directory that can be used by a crawler/robot. And if there is an isolated case, it is better to specifically allow that file than to assume that search engines use its scripts! After all, WordPress certainly does not ship with an anti-search-engine robots.txt by default!