Imagine working hard to write a great story or article, only to find someone else claiming it as their own. That’s what happens when people steal your website content.

Content stealing, or ‘scraping’, is a big problem for website owners. These people are thieves who copy your work, use it on their own sites, and sometimes even pretend it is theirs. This can be really frustrating and unfair.

In this article, we will cover what blog content scraping is, how you can reduce and prevent content scraping, and even how to take advantage of content scrapers for your own benefit.

What Is Blog Content Scraping in WordPress?

Blog content scraping is when content is taken from numerous sources and republished on another site. Usually, this is done automatically via your blog’s RSS feed.

Unfortunately, it is very easy and very common to have your WordPress blog content stolen in this way. If it has happened to you, then you understand how stressful and frustrating it can be.

Sometimes, your content will be simply copied and pasted directly to another website, including your formatting, images, videos, and more.

Other times, your content will be reposted with attribution and a link back to your website, but without your permission. Although this can help your SEO, you may want to keep your original content hosted on your site only.

Why Do Content Scrapers Steal Content?

Some of our users have asked us why scrapers are stealing content. Usually, the main motivation for content theft is to profit from your hard work:

- Affiliate commission: Dishonest affiliate marketers may use your content to bring traffic to their site through search engines in order to promote their niche products.

- Lead Generation: Lawyers and realtors may pay someone to add content and gain authority in their community without realizing it is being scraped from other sources.

- Advertising Revenue: Blog owners may scrape content to create a hub of knowledge in a certain niche ‘for the good of the community’ and then plaster the site with ads.

Is It Possible to Completely Prevent Content Scraping?

In this article, we will show you some steps you can take to reduce and prevent content scraping. But unfortunately, there is no way to completely stop a determined thief.

That’s why we finished this article with a section on how you can take advantage of content scrapers. While you can’t always stop a thief, you may be able to gain some traffic and revenue through the content they have stolen from you.

What Should You Do When You Discover Someone Has Scraped Your Content?

Since it’s not possible to completely stop scrapers, you may one day discover that someone is using content they stole from your blog. You may wonder what to do when that happens.

Here are a few approaches that people take when dealing with content scrapers:

- Do Nothing: You can spend a lot of time fighting scrapers, so some popular bloggers decide to do nothing. Google already sees well-known sites as authorities, but that’s not true of smaller sites. So this approach is not always the best, in our opinion.

- Take Down: You can contact the scraper and ask them to take the content down. If they refuse, then you can submit a takedown notice. You can learn how in our guide on how to easily find and remove stolen content in WordPress.

- Take Advantage: While we actively work at having content scraped from WPBeginner taken down, we also use a few techniques to get traffic and make money from scrapers. You can learn how in the ‘Take Advantage of Content Scrapers’ section below.

With that being said, let’s take a look at how to prevent blog scraping in WordPress, step by step.

1. Copyright or Trademark Your Blog’s Name and Logo

Trademark and copyright laws protect your intellectual property rights, brand, and business against many legal challenges. This includes plagiarism and illegal use of your copyrighted material or your brand’s name and logo.

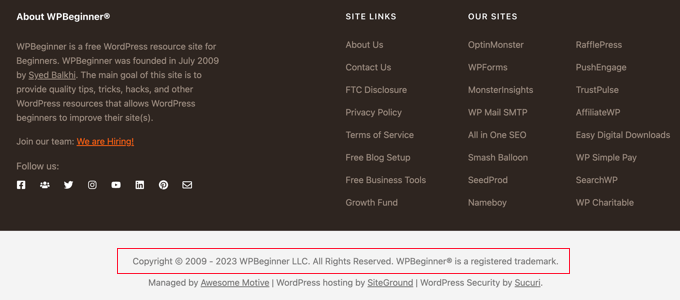

You should clearly display a copyright notice on your site. Your website content is automatically covered by copyright law. Even so, a visible notice lets visitors know that your content is copyrighted and that they cannot use your protected property for business purposes.

For example, you can add a copyright notice with a dynamic date to your WordPress footer. This will keep your copyright notice up to date.
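A dynamic date means the year range is computed when the page is rendered, not typed in by hand. On a WordPress site this is usually done with a PHP snippet in the theme or through a plugin such as WPCode. The Python sketch below only illustrates the logic. The site name and start year are hypothetical placeholders.

```python
from datetime import date
from typing import Optional


def copyright_notice(site_name: str, start_year: int, today: Optional[date] = None) -> str:
    """Build a footer notice such as '© 2009-2024 Example Blog'."""
    # Compute the current year at render time so the notice never goes stale.
    current_year = (today or date.today()).year
    if current_year <= start_year:
        years = str(start_year)
    else:
        years = f"{start_year}-{current_year}"
    return f"© {years} {site_name}"


print(copyright_notice("Example Blog", 2009))
```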

This may discourage some people from stealing your content. It will also help if you ever need to send a cease and desist letter or file a DMCA complaint to have your stolen content taken down.

You can also apply for copyright registration online. This process can be complicated, but luckily, there are low-cost legal services that can help small businesses and individuals.

Learn how in our guide on how to trademark and copyright your blog’s name and logo.

2. Make Your RSS Feed More Difficult to Scrape

Since blog content scraping is usually done automatically via your blog’s RSS feed, let’s look at a few helpful changes you can make to your feed.

Don’t Include the Full Post Content in Your WordPress RSS Feed

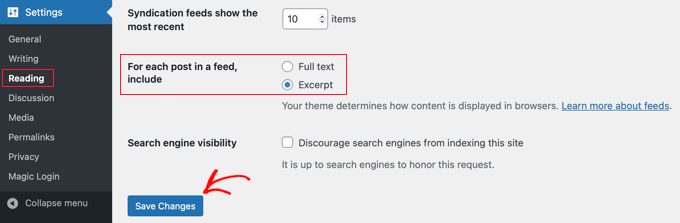

You can include just a summary of each post in your RSS feed instead of the full content. This includes an excerpt as well as post metadata such as the date, author, and category.

There is certainly debate in the blogging community about whether to have full RSS feeds or summary feeds. We won’t get into that now except to say that one of the pros of just having a summary is that it helps prevent content scraping.

You can change the settings by going to Settings » Reading in your WordPress admin panel. You need to select the ‘Excerpt’ option and then click the ‘Save Changes’ button.

Now, the RSS feed will only show an excerpt of your article. If someone is stealing your content through your RSS feed, then they will only get the summary, not the full post.
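The minimal sketch below shows what a summary feed actually hands over. It assumes a plain word-count cutoff of 55 words, which is WordPress's default excerpt length, and strips HTML tags with a simple regex. WordPress's own excerpt functions handle more edge cases. The `make_excerpt` name and the `[...]` marker are illustrative.

```python
import re


def make_excerpt(html_content: str, word_limit: int = 55, more: str = " [...]") -> str:
    """Reduce a post's HTML to a plain-text summary of at most `word_limit` words."""
    # Remove tags so only text reaches the feed (a simple regex, not a full HTML parser).
    text = re.sub(r"<[^>]+>", " ", html_content)
    words = text.split()
    if len(words) <= word_limit:
        return " ".join(words)
    # Truncate and mark the cut so readers know the full post lives on your site.
    return " ".join(words[:word_limit]) + more


print(make_excerpt("<p>Only the <strong>first</strong> few words reach the feed.</p>", word_limit=4))
```

A scraper pulling this feed gets the short summary, not the full article.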

If you would like to tweak the summary, then you can see our guide on how to customize WordPress excerpts.

Optimize Your RSS Feed to Prevent Scraping

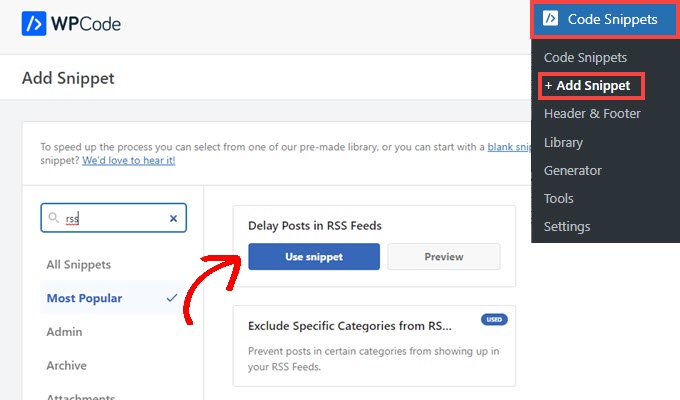

There are other ways you can optimize your WordPress RSS feed to protect your content, get more backlinks, increase your web traffic, and more. One of the best ways is to delay posts from appearing in the RSS feed.

The benefit is that when you delay posts from appearing in your RSS feed, you give search engines time to crawl and index your content before it appears elsewhere, such as on scrapers’ websites. Search engines are then more likely to see your site as the original source and the authority.
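The delay works by leaving out of the feed any post published less than N minutes ago. Here is a minimal Python sketch of that filter. It assumes posts are plain dictionaries with a `published` timestamp and uses a hypothetical 10-minute delay. It is not the WPCode recipe itself, which adds its own PHP code to WordPress.

```python
from datetime import datetime, timedelta


def posts_for_feed(posts, now, delay_minutes=10):
    """Keep only posts published at least `delay_minutes` before `now`."""
    cutoff = now - timedelta(minutes=delay_minutes)
    return [post for post in posts if post["published"] <= cutoff]


now = datetime(2024, 5, 1, 12, 0)
posts = [
    {"title": "Older post", "published": datetime(2024, 5, 1, 11, 30)},
    {"title": "Just published", "published": datetime(2024, 5, 1, 11, 55)},
]
print([p["title"] for p in posts_for_feed(posts, now)])  # ['Older post']
```

The post published five minutes ago stays out of the feed until the delay has passed. Search engines crawling your site can still see it in the meantime.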

The safest and easiest way to do this is using WPCode because it has a recipe that automatically adds the correct custom code to WordPress.

For detailed instructions, see our guide on how to delay posts from appearing in your WordPress RSS feed.

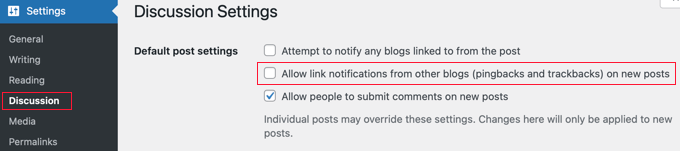

3. Disable Trackbacks, Pingbacks, and REST API

In the early days of blogging, trackbacks and pingbacks were introduced as a way for blogs to notify each other about links. When someone links to a post on your blog, their website will automatically send a ping to yours.

This pingback will then appear in your blog’s comment moderation queue with a link to their website. If you approve it, then they get a backlink and mention from your site.

This gives spammers an incentive to scrape your site and send trackbacks. Luckily, you can disable trackbacks and pingbacks to give scrapers one less reason to steal your content.
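Under the hood, a pingback is an XML-RPC call named `pingback.ping`. The linking site sends it to your site's XML-RPC endpoint, which in WordPress is `xmlrpc.php`. The call passes two URLs: the page containing the link and the page being linked to. The sketch below uses Python's standard `xmlrpc.client` module to build, but not send, such a request. Both URLs are hypothetical.

```python
import xmlrpc.client


def build_pingback_request(source_url: str, target_url: str) -> str:
    """Build (but do not send) the XML-RPC body for a pingback.ping call."""
    # Parameter order: the page that contains the link, then the page it links to.
    return xmlrpc.client.dumps((source_url, target_url), methodname="pingback.ping")


body = build_pingback_request(
    "https://scraper.example/copied-post/",     # hypothetical scraper page
    "https://yourblog.example/original-post/",  # hypothetical post on your site
)
print(body)
```

A scraper that republishes your post with its links intact can trigger exactly this kind of request. The notification then lands in your moderation queue.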

For more information, check out our guide on disabling trackbacks on all future posts. You might also like to learn how to disable trackbacks and pings on existing WordPress posts.

Disable WordPress REST API

Aside from trackbacks and pingbacks, we also recommend disabling the WordPress REST API, as it can make it easier for spammers to scrape your content.

We have a detailed guide on how you can disable the WordPress REST API.

All you need to do is install and activate the free WPCode plugin and use its pre-made snippet to disable the REST API.
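An open REST API makes scraping easier because of what a default install serves. On a default WordPress install, a request to `/wp-json/wp/v2/posts` returns posts as JSON, including each post's full rendered HTML. The sketch below parses a hypothetical, heavily trimmed response of that shape. Real responses contain many more fields.

```python
import json

# Hypothetical, heavily trimmed example of a /wp-json/wp/v2/posts response.
sample_response = json.dumps([
    {
        "id": 42,
        "link": "https://yourblog.example/hello-world/",
        "title": {"rendered": "Hello World"},
        "content": {"rendered": "<p>The full text of the post.</p>"},
    }
])


def extract_posts(response_body: str):
    """Return (title, link, full HTML content) for each post in the response."""
    return [
        (post["title"]["rendered"], post["link"], post["content"]["rendered"])
        for post in json.loads(response_body)
    ]


for title, link, html in extract_posts(sample_response):
    print(title, link, len(html))
```

Because the data is already structured, a scraper does not even need to parse your theme's HTML.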

4. Block the Scraper’s Access to Your WordPress Website

One way to stop scrapers from stealing your content is to take away their access to your website. You can do this manually by blocking their IP address, but most users will find it easier to use a security plugin or a web application firewall.

Block the Scraper Using a Security Plugin (Recommended)

Blocking scrapers manually is tricky and a lot of work, especially since many hacking attempts and attacks come from a wide range of random IP addresses all over the world. It’s almost impossible to keep up with all of them.

That’s why you need a Web Application Firewall (WAF) such as Wordfence or Sucuri. These act as a shield between your website and all incoming traffic, monitoring your website traffic and blocking common security threats before they reach your WordPress site.

For the WPBeginner website, we use Sucuri. It is a website security service that protects your website against such attacks using a web application firewall.

Basically, all your website traffic goes through the security service’s servers, where it is examined for suspicious activity. They automatically block suspicious IP addresses from reaching your website altogether. See how Sucuri helped us block 450,000 WordPress attacks in 3 months.
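Firewall services use their own, much more sophisticated detection. The basic idea of blocking an IP that makes too many requests too quickly can be sketched as a sliding-window rate limiter. The thresholds and the `RateLimiter` class below are hypothetical. They are not how Sucuri or Wordfence actually work.

```python
from collections import defaultdict, deque


class RateLimiter:
    """Block an IP once it makes more than `max_requests` within `window_seconds`."""

    def __init__(self, max_requests, window_seconds):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self.recent = defaultdict(deque)  # ip -> timestamps of recent requests
        self.blocked = set()

    def allow(self, ip, timestamp):
        """Record a request at `timestamp` (seconds) and return True if it may proceed."""
        if ip in self.blocked:
            return False
        window = self.recent[ip]
        # Drop timestamps that have slid out of the window.
        while window and timestamp - window[0] >= self.window_seconds:
            window.popleft()
        window.append(timestamp)
        if len(window) > self.max_requests:
            self.blocked.add(ip)  # in this sketch, a blocked IP stays blocked
            return False
        return True


limiter = RateLimiter(max_requests=3, window_seconds=10)
print([limiter.allow("203.0.113.7", t) for t in (0, 1, 2, 3)])  # [True, True, True, False]
```

A real firewall combines signals like this with reputation data, so a normal reader who opens a few pages quickly is not blocked.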

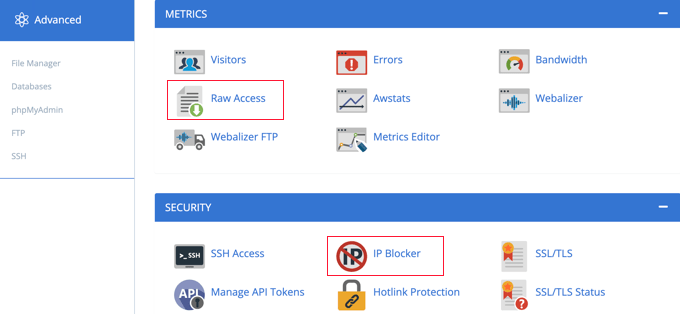

Manually Block or Redirect the Scraper’s IP Address

Advanced users may also wish to manually block a scraper’s IP address. This is more work, but you can specifically target the scraper’s address once you learn it. Web developer Jeff Starr suggests this approach when he writes about how he handles content scrapers.

Note: Adding code to website files can be dangerous. Even a small mistake can cause major errors on your site. That’s why we only recommend this method for advanced users.

You can find the scraper’s IP address by visiting ‘Raw Access Logs’ in the cPanel dashboard of your web hosting account. You need to look for IP addresses with an unusually high number of requests and keep a record of them, say by copying them into a separate text file.
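A short script can do the counting for you. This minimal sketch assumes your raw access log uses the common Apache/NGINX log format, where the client IP is the first field on each line. The sample lines use reserved documentation IP addresses. The threshold is a hypothetical value you would tune for your own traffic.

```python
from collections import Counter


def top_requesters(log_lines, threshold):
    """Return (ip, request_count) pairs at or above `threshold`, busiest first."""
    counts = Counter()
    for line in log_lines:
        fields = line.split(maxsplit=1)
        if fields:  # skip blank lines
            counts[fields[0]] += 1
    return [(ip, n) for ip, n in counts.most_common() if n >= threshold]


sample_log = [
    '203.0.113.7 - - [01/May/2024:12:00:01 +0000] "GET /feed/ HTTP/1.1" 200 5120 "-" "Mozilla/5.0"',
    '203.0.113.7 - - [01/May/2024:12:00:02 +0000] "GET /feed/ HTTP/1.1" 200 5120 "-" "Mozilla/5.0"',
    '203.0.113.7 - - [01/May/2024:12:00:03 +0000] "GET /feed/ HTTP/1.1" 200 5120 "-" "Mozilla/5.0"',
    '198.51.100.2 - - [01/May/2024:12:05:00 +0000] "GET / HTTP/1.1" 200 9000 "-" "Mozilla/5.0"',
]
print(top_requesters(sample_log, threshold=3))  # [('203.0.113.7', 3)]
```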

Tip: You need to make sure that you don’t end up blocking yourself, legitimate users, or search engines from accessing your website. Copy a suspicious-looking IP address and use online IP lookup tools to find out more about it.

Once you are confident that the IP address belongs to a scraper, you can block it using the cPanel ‘IP Blocker’ tool or by adding code like this in your root .htaccess file:

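```apache
# Replace this placeholder (a reserved documentation address) with the scraper's real IP.
# "Deny from" is Apache 2.2-style syntax; on Apache 2.4 it needs mod_access_compat,
# and the native equivalent is "Require not ip" inside a <RequireAll> block.
Deny from 203.0.113.45
```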

Make sure you replace the IP address in the code with the one you want to block. You can block multiple IP addresses by entering them on the same line, separated by spaces.
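It is easy to make a typo in an IP address, such as an octet above 255, so it can help to validate addresses before pasting them into .htaccess. The Python sketch below uses the standard `ipaddress` module. It rejects malformed addresses and skips any address you list as never-block, such as your own IP. It then builds a single `Deny from` line. The function name and sample addresses are hypothetical.

```python
import ipaddress


def build_deny_line(candidates, never_block=()):
    """Validate IPs and return one .htaccess 'Deny from' line ('' if nothing to block)."""
    protected = {ipaddress.ip_address(ip.strip()) for ip in never_block}
    chosen = []
    for raw in candidates:
        # ip_address() raises ValueError for malformed input such as 123.456.789.1
        addr = ipaddress.ip_address(raw.strip())
        if addr in protected or str(addr) in chosen:
            continue  # never block yourself, and skip duplicates
        chosen.append(str(addr))
    return ("Deny from " + " ".join(chosen)) if chosen else ""


print(build_deny_line(
    ["203.0.113.7", "198.51.100.2", "203.0.113.7"],
    never_block=["198.51.100.2"],  # e.g. your own IP address
))
# Deny from 203.0.113.7
```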

For detailed instructions, see our guide on how to block IP addresses in WordPress.

Instead of simply blocking the scrapers, Jeff suggests you could send them dummy RSS feeds instead. You could create feeds full of Lorem Ipsum and annoying images or even send them right back to their own website, causing an infinite loop and crashing their server.

To redirect them to a dummy feed, you will need to add code like this to your .htaccess file:

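```apache
# Send every request from IPs starting with 203.0.113. (a placeholder range) to a dummy feed.
RewriteEngine On
RewriteCond %{REMOTE_ADDR} ^203\.0\.113\.
RewriteRule .* http://dummyfeed.com/feed [R,L]
```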

5. Prevent Image Theft in WordPress

It’s not just your written content that you need to protect. You should also prevent image theft in WordPress.

Like text, there is no way to completely stop people from stealing your images, but there are plenty of ways to discourage image theft on a WordPress website.

For example, you can disable the hotlinking of your WordPress images. This will mean that if someone scrapes your HTML content, their images will not load on their site.

It will also reduce your server load and bandwidth usage, boosting your WordPress speed and performance.
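Hotlink protection generally works by checking the HTTP `Referer` header on image requests. Requests that come from pages on other domains are refused. The Python sketch below shows that decision in isolation. The allowed host list is hypothetical. In practice the check is usually done by your server, CDN, or a plugin rather than by code like this.

```python
from typing import Iterable, Optional
from urllib.parse import urlparse


def allow_image_request(referer: Optional[str], allowed_hosts: Iterable[str]) -> bool:
    """Decide whether to serve an image based on the page that embedded it."""
    if not referer:
        # No Referer (direct visits, or browsers/proxies that strip it): allow,
        # so that real readers are not locked out.
        return True
    host = (urlparse(referer).hostname or "").lower()
    # Allow your own domain and its subdomains (e.g. www.), block everything else.
    return any(host == h or host.endswith("." + h) for h in allowed_hosts)


print(allow_image_request("https://scraper.example/copied-post/", ["yourblog.example"]))  # False
```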

Alternatively, you can add a watermark to your images that gives you credit. This will make it clear that the scraper has stolen your content.

You can learn these two techniques, as well as other ways to protect your images, in our guide on ways to prevent image theft in WordPress.

6. Discourage Manual Copying of Your Content

While most scrapers use automatic tools, some content thieves may try to manually copy all or part of your content.

One way to make this more difficult is to prevent them from copying and pasting your text. You can do this by making it harder for them to select the text on your website.

To learn how to stop manual copying of your content, see our step-by-step guide on how to prevent text selection and copy/paste in WordPress.

However, this will not completely protect your content. Remember, tech-savvy users can still view the source code or use the Inspect tool to copy anything they want. Also, this method will not work with all web browsers.

Also, keep in mind that not everyone copying your text will be a content thief. For instance, some people may want to copy the title to share your post on social media.

That’s why we recommend you only use this method if you feel it’s truly needed for your site.

7. Take Advantage of Content Scrapers

As your blog gets larger, it is almost impossible to stop or keep track of all content scrapers. We still send out DMCA complaints. However, we know that there are tons of other sites that are stealing our content that we just cannot keep up with.

Instead, our approach is to try to take advantage of content scrapers. It’s not so bad when you see that you’re making money from your stolen content or receiving a lot of traffic from a scraper’s website.

Make Internal Linking a Habit to Gain Traffic and Backlinks from Scrapers

In our ultimate guide on SEO, we recommend that you make internal linking a habit. By placing links to your other content in your blog posts, you can increase pageviews and reduce the bounce rate on your own site.

But there is a second benefit when it comes to scraping. Internal links will get you valuable backlinks from the people who are stealing your content. Search engines like Google use backlinks as a ranking signal, so the additional backlinks are good for your SEO.

Lastly, these internal links allow you to steal the scraper’s audience. Talented bloggers place links on interesting keywords, making it tempting for users to click. Visitors to the scraper’s website will also click the links, which will lead them straight back to your own website.
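You can check whether a scraped copy kept your internal links by parsing its HTML and listing the links that point back to your domain. This minimal sketch uses Python's built-in `html.parser`. The domain and sample HTML are hypothetical. Relative links, or links a scraper has stripped, will not show up.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class LinkCollector(HTMLParser):
    """Collect the href of every <a> tag in a document."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def links_back_to(html, your_host):
    """Return absolute links in `html` that point to `your_host` or its www. subdomain."""
    parser = LinkCollector()
    parser.feed(html)
    wanted = {your_host.lower(), "www." + your_host.lower()}
    return [href for href in parser.hrefs if (urlparse(href).hostname or "") in wanted]


scraped_page = (
    '<p>Read our <a href="https://yourblog.example/seo-guide/">SEO guide</a> '
    'or visit <a href="https://scraper.example/">this site</a>.</p>'
)
print(links_back_to(scraped_page, "yourblog.example"))  # ['https://yourblog.example/seo-guide/']
```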

Auto Link Keywords With Affiliate Links to Make Money from Scrapers

If you make money on your website from affiliate marketing, then we recommend enabling auto-linking in your RSS feeds. This will help you maximize your earnings from readers who only read your website via RSS readers.

Even better, it will help you make money from the sites that are stealing your content.

Simply use a WordPress plugin like ThirstyAffiliates that will automatically replace assigned keywords with affiliate links. We show you how in our guide on how to automatically link keywords with affiliate links in WordPress.
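The basic idea behind keyword auto-linking can be sketched as a single regex pass that wraps the first occurrence of each keyword in a link. This is not ThirstyAffiliates' actual implementation. The keywords and affiliate URLs are hypothetical. The sketch also assumes plain text input, not HTML that may already contain links. Longer keywords are tried first so that a phrase like "web hosting" wins over "hosting".

```python
import re
from html import escape


def auto_link(text, keyword_links, max_per_keyword=1):
    """Wrap up to `max_per_keyword` occurrences of each keyword in an affiliate link."""
    if not keyword_links:
        return text
    keywords = sorted(keyword_links, key=len, reverse=True)
    pattern = re.compile(r"\b(" + "|".join(re.escape(k) for k in keywords) + r")\b", re.IGNORECASE)
    lookup = {k.lower(): url for k, url in keyword_links.items()}
    used = {}

    def replace(match):
        word = match.group(0)
        key = word.lower()
        if used.get(key, 0) >= max_per_keyword:
            return word  # limit reached: leave the text alone
        used[key] = used.get(key, 0) + 1
        return f'<a href="{escape(lookup[key])}" rel="sponsored">{word}</a>'

    # One pass, so inserted links are never rescanned and nested.
    return pattern.sub(replace, text)


print(auto_link("hosting and more hosting", {"hosting": "https://aff.example/h"}))
# <a href="https://aff.example/h" rel="sponsored">hosting</a> and more hosting
```

If a scraper republishes your feed, these links come along with the content.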

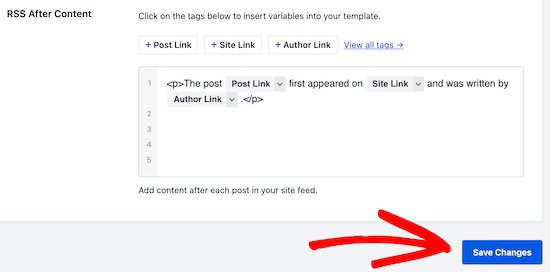

Promote Your Website in Your RSS Footer

You can use the All in One SEO plugin to add custom items to your RSS footer.

For example, you can add a banner that promotes your own products, services, or content.

The best part is that those banners will appear on the scraper’s website as well.

In our case, we always add a little disclaimer at the bottom of posts in our RSS feeds. By doing this, we get a backlink to the original article from the scraper’s site.

This lets Google and other search engines know we are the authority. It also lets their users know that the site is stealing our content.
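The footer itself is just a bit of HTML appended to each item's content before the feed is generated. Here is a minimal Python sketch of that step. The wording, titles, and URLs are hypothetical. All in One SEO builds its RSS footer through its own settings screen, not with code like this.

```python
from html import escape


def add_feed_footer(content_html, post_title, post_url, site_name, site_url):
    """Append an attribution line that links back to the original post and site."""
    footer = (
        f'<p>The post <a href="{escape(post_url)}">{escape(post_title)}</a> '
        f'first appeared on <a href="{escape(site_url)}">{escape(site_name)}</a>.</p>'
    )
    return content_html + "\n" + footer


print(add_feed_footer("<p>Body</p>", "My Post", "https://yourblog.example/my-post/",
                      "Your Blog", "https://yourblog.example/"))
```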

For more tips, check out our guide on how to control your RSS feed footer in WordPress.

We hope this tutorial helped you learn how to prevent blog content scraping in WordPress. You may also want to see our ultimate WordPress security guide or our expert pick of the best content protection plugins for WordPress.

If you liked this article, then please subscribe to our YouTube Channel for WordPress video tutorials. You can also find us on Twitter and Facebook.

Dennis Muthomi

OK Wow, this is an incredibly comprehensive guide on preventing blog content scraping! Thank you, WPBeginner, for shedding light on this frustrating issue.

I especially liked the section on making the RSS feed more difficult to scrape – I hadn’t considered that before.

The tip about delaying posts from appearing in the RSS feed is brilliant and something I’ll definitely implement on my own blog RIGHT AWAY!

Moinuddin Waheed

I have many friends who used to talk to me about using RSS feeds to make content for their websites this way. I was not aware of exactly how it worked and what benefits they got by doing that.

Scraping others’ content and presenting it as if they created it themselves is an offense, but in an unethical world, who cares? Thanks for making this guide, which we can follow to prevent our content from being scraped and at least turn it to our advantage.

Jiří Vaněk

Thank you for the article. I have a blog with over 1200 articles, and I need to start addressing that as well. Thanks for the valuable advice.

WPBeginner Support

You’re welcome!

Admin

Toheeb Temitope

Thanks for the post.

But can I remove or disable the RSS feed entirely, or is there some special benefit to it?

Then, if I want to disable the RSS feed entirely, how would I do it?

Thanks.

WPBeginner Support

If you want to disable the RSS feed for your site, our guide below would be helpful:

https://www.wpbeginner.com/wp-tutorials/how-to-disable-rss-feeds-in-wordpress/

RSS feeds can be helpful to certain users of your site who use RSS feed readers to know when a site has new content.

Admin

Moinuddin Waheed

It is good to know that we can even disable the RSS feed, thereby preventing potential theft and scraping of the content.

Though disabling the RSS feed has some trade-offs as well.

Is there any SEO disadvantage to disabling the RSS feed?

Or does it have nothing to do with SEO and ranking?

WPBeginner Support

Your RSS feed should not affect your site’s SEO.

Giovanni

Thank you. Exactly the information I need. But do scrapers still use RSS feeds in 2019?

WPBeginner Support

They certainly can and will try to.

Admin

Nergis

We hear so much about getting site content through content curation. Is content scraping the same as content curation? If not, what’s the difference between the two?

WPBeginner Support

Content scraping is taking content from other sites to place on your site without permission. Content curation is normally linking to other content within content you have created.

Admin

Kingsley Felix

I am facing these issues. I had 20+ scrapers for one of our brands; then we moved elsewhere, and they are back again.

WPBeginner Support

Content scrapers are a constant struggle, sadly.

Admin

slevin smith

I found a really bad content scraper copying my blog. Not only do they steal my content, they used the same name for their spam blog, only separated with a hyphen, along with all my descriptions and tags, basically trying to be me. I had used links in my RSS feed to my blog, YouTube channel, Facebook, Twitter, Pinterest & Google Plus, and those show up on their spam blog. I also found that PNG images show up on their front page but JPEGs do not, but that may just be on Blogger.

astrid maria boshuisen

I absolutely love the interlinking-idea. Will have to look at the RSS suggestion, since I forgot how that works exactly, having focussed on writing Kindle e-books for a while (talk about content scraping – zero protection there!.. hence my return to website writing) but I feel I have really got a place to start with protecting my content! Thanks!

Danni Phillips

WOW! So much to take into consideration when starting a blog. My blog is only 2 weeks old. I have used mainly WP Beginner to set up my blog. So much good info set out in a way a newbie can follow.

I don’t know if this works for content scraping but I have installed a plugin called Copyright Proof. It disables right click so that people can not copy and paste your content.

I decided to use this plugin as it was a recommended plugin for author sites.

Eri

Your post can be copied easily, trust me.

Reo

Disabling selection is a good method, but it only works in popular web browsers like Chrome, Safari, and Opera, not in IE and Edge.

Dave Coldwell

Another great article, I work as a freelance journalist so I sell a lot of articles and it’s up to the people who buy it to decide on their policies.

But I also have a couple of blogs and affiliate websites so I think I might need to take a look at what’s happening with my content.

Absynth

Does not giving credit where it’s due count as “content scraping”?

Because Jeff Starr wrote this same post at Perishable Press over 5 years ago:

Check the structure and terminology of your article and compare it to the original.

Just sayin.

WPBeginner Support

We did give credit to Jeff Starr. Please read the actual article before pointing out errors.

Admin

Absynth

Yes my apologies.. I missed that the first time through. My bad

Sieu

I have just developed a theme for Blogger, and that theme needs a full feed to work. I worry about content scraping: if many scrapers use my content on their Blogger sites, with the same content as my site and backlinks pointing to my site, I think my blog could be treated as spam in Google’s eyes and be deleted.

Lori

Thanks for this amazing article with useful tips! I actually just got a “Thin Content” penalty from Google. I asked an SEO expert for help, they told me to stop scraping content. They sent me a link of an article I wrote yesterday and thought I had stolen it from another website. The crappy thing is, they were stealing from me, not just that article, but probably a couple thousand articles! They are still in Google search, and I am not. I am being the one penalized! Turns out there are at least three websites scraping my content, not even sure what to do.

Raviraj

Awesome article.

I sort of agree with most of the points you have discussed. Actually few of the points are pretty awesome.

But if your sole business is based on content in your website, shouldn’t we be more careful about scrapers?

I don’t think content theft would ever be good to the owner of the content.

I guess we should all think of adopting preventive measures rather than reactive ones. You can consider using ShieldSquare, a content protection solution to stop content scraping permanently.

Andre

I know this is an old article, but the one source that is NOTORIOUS for allowing content scraping is WordPress with its “Press This” feature. They are basically encouraging this.

Sara

I think I may have finally found the answer to my problem. I have been thinking someone has been stealing my stories and making them into “new” stories. I thought either someone is out to get me or I am losing my mind. I was almost losing my mind over thinking like this. Paranoid. Concerned someone was listening to my private phone calls. When really, all the information has come directly from my blog! This article may have saved my life. Literally. I am not even joking because I have been so afraid that I was going crazy and very selectively trying to talk about it with friends, to get feedback or support and being looked at like I am nuts and need to go to the psych ward for a while. This article makes what has been happening to me, make total sense. Thank you! I am so overwhelmed with relief.

John

Thanks for some tips, but a good chunk of this article is not very helpful. Most scrapers are not blind scrapers: the content is generally sucked in, looked at by a human eye, and then published. This means that, even taking a minute to look at each article, the spam kid is able to publish hundreds of copied articles a day. The backlinks problem is very easy for content scrapers to circumvent, as feed importers have pre-processing options, and they generally set them to delink the body. Also, I do not see how turning the RSS feed into a summary helps at all. The feed importers only use the RSS feed to grab the new content link. From there they follow the skeleton of your HTML, which you have nicely set up with proper image, title, link, etc. tags for the convenience of Google, and very easily extract the content.

Obviously, blocking the IP is a very good solution. DMCAs are generally a waste of time; they take time to formulate, and stupid hosts take time to respond (since spammers choose these hosts specifically because they’re lax on spam-like activity). Of all, Google is the most frustrating. No matter how many reports you file with them, they never take action on any of the stolen content on which they’re showing ads. They still rank the crap-spam site well in the search results, despite it being easy for their systems to detect copies.

Evie

John, I couldn’t agree with you more. Google got mad at me stating that I was the person stealing my own content. This person stole my content and put it on blogger. The nerve. There needs to be a solution for this. At this point, I just block!

WPBeginner Staff

Then perhaps the best way for you is to change the licensing and aggressively send takedown notices to content scrapers. Meanwhile, keep focusing on creating quality content.

Philipp D

Hi there,

I just stumbled upon your article while looking for answers to some of my concerns.

I, together with some friends, launched a website about DIY in Italy a few months ago, and it is working unexpectedly well: rankings are high, lots of traffic, etc. Still, our PR is 0. Our content has a Creative Commons 4.0 license, because we really believe it’s a good way to share content. HOWEVER:

Some time ago we noticed a PR4 site with lots of traffic copying our top articles, linking back to our homepage (which is not what you’re supposed to do with a CC license, but it’s still ok). The problems are these:

1. there’s a whole lot of smaller sites scraping their (our) content and linking back to them instead of our site

2. the PR4 site and some of the smaller sites somehow rank better than our site

3. there are strong indications that a Google penalty has been applied to OUR content, as it has lower PR than most of the other pages (which have been online for a long time).

We’re in contact with the PR4 site, and it’s OK with us if they use our content, as long as they link back to the original article (that’s the whole point of the CC license). BUT we’re trying to find a solution to avoid Google penalties: would rel canonical do the job? What is your opinion? Should we change our license and be more aggressive towards content copying?

Thank you!

WPBeginner Support

Philipp, If you have not already done so, then you should create a webmaster tools account for your site and submit your sitemap. It helps you figure out if there is a problem with your site, how your site is doing on search, and you can use lots of other tools. It also helps Google better understand where some content first appeared.

We don’t think changing the license will stop content scrapers from copying your content.

Admin

Philipp

Hi! Yes, we set up a Webmaster Tools account, linked the site to our Google+ page, and linked most of the authors to their Google+ profiles using publisher and author tags. Authorship seems to be working fine in search snippets, but so far it doesn’t seem to make much difference in the case of scraped content. Higher-PR pages scraping our content are still on top…

Garratt

One of the best ways not to be affected by this is to ping effectively. Pinging and manually submitting pages to Google and Bing gets spiders on your site FAST. They index the pages ASAP, and then, when they find duplicate content on other sites, they consider you the authority.

I do, however, have a sneaking suspicion this might have to do with PageRank… But Matt Cutts (webspam team @ Google) has advocated using pingers on this very topic. I’m just not sure how much I can trust what he says.

To add more services, go to Settings -> Writing Settings -> Update Services -> Open the “Update services” link in a new tab and copy all the update services. Back in WordPress paste them in the ping list and click save.

Open an account in Bing Webmaster Tools for manual URL submission and fast indexing.

Chris Backe

I recently discovered a guy who was taking an RSS feed from my blog. Bear in mind that my blog has a summary feed with Yoast’s ‘This post was found first on’ line. I sent the guy a thank-you message, basically telling him that he’s giving me backlinks AND telling Google he’s copying my website (since they can look at the timestamps to see which was published first).

Checked out 2 days later, and all my stuff was mysteriously gone…

Editorial Staff

Hah yup. Most of these scammers aren’t very bright lol. Glad you got it fixed.

-Syed

Admin

Ian

Has anyone seen or used this WP anti-scraping plugin http://wordpress.org/plugins/wordpress-data-guards/? It sounds solid, but very few people have downloaded it. I’m not technical, so I would appreciate opinions on its worth or effect on SEO.

Editorial Staff

You can definitely use that plugin. It blocks right clicks, keyboard shortcuts for copying, IP blacklisting, etc. Those all prevent manual scraping; however, most content scrapers use automatic tools, so none of those would be super helpful.

Admin

Ian

Thanks for your reply. The pro version states it protects you from bot attacks, so I assume that means scraper bots? The price puts me off installing it on all my sites, but I may use it on one just to see how well it works.

Mark Conger

This is one of, if not the best, “beginner” article I’ve ever come across on the web.

After reading it I feel like I just had a meeting with a security consultant.

I’m applying these techniques right frickin now!

Thanks. I’m now a follower of this site.

Editorial Staff

Thanks for the very kind words Mark

Admin

Neil Ferree

It’s only happened to me a few times. Some blogger from outside the USA has taken my post word for word and posted it to their site as if it were their own. Since it was just a single post with my YT video embedded, I didn’t sweat the details too much, since my channel saw a nice spike in visits anyway.

Edward B. Rockower, Ph.D.

Just want to say thanks, thanks, and thanks!

I just today discovered your website, only read 3 articles so far (including this one)… but I’m extremely impressed.

I’ve only been blogging now for 5 weeks, but finding it addictive, especially seeing the growing traffic and user engagement as a result of my efforts. Seeing 100 visitors to my blog site in one day, and being able to see who’s referring them, motivates me to learn all I can to increase the social media marketing and interactions with new visitors.

Best regards,

@earthlingEd

Debbie Gilbert

I love your website and was floored to read about content scraping! Is there any way to create a watermark that is not distracting to your readers but is dead obvious on the scraper’s site?

Editorial Staff

You can do hotlink protection among other things to disable images on domains that are not whitelisted.

Admin

Usman

Is it legal to post the complete article from another website and writing source website name at bottom of article?

Editorial Staff

No.

Admin

Usman

And if we give direct link to article at bottom?

Dan

It is still not good unless the owner approves it

Abdul Karim

Is there any way or plugin for this?

Someone is copying my fashion blog pictures and posting them on their forum,

but when I click on an image in that forum, it opens in a new window.

I want a plugin or script so that if he copies my images, anyone who clicks on those images is redirected to my blog post related to that image.

Is there any plugin yet? Something that links post images?

Editorial Staff

None that we know of.

Admin

Abdul Karim

I’ve done it. Just change this:

When you upload any picture, the right side shows a link URL setting.

The default setting is Media File.

You have to change it to Attachment URL.

Then you’re done!

When someone copies your blog images, that gives a backlink to your post page.

Anton

Suppose someone takes an article written in English and translates it into another language, using their head rather than Google Translate, say because most people in that country don’t understand English. Would you still call them scrapers? What is your opinion on that?

Personally, I don’t find it very problematic, although I believe the “author” should link back to the original article and make clear that the article is a translation.

Editorial Staff

Unless you have the author’s written permission, it is technically scraping.

Admin

Greg

This is a tremendous article. After reading it, I hope you do not see me as a content scraper. I have used excerpts from you (curated), but I always include a “Read the Full Article” link to your page, and many of my posts are tweeted with your Twitter account included. If you do not want this, please let me know and I will gladly remove it. I am very appreciative of your work and want to share it with my visitors. It is not intended to steal your visitors, but to give good value to mine and send them on to you for more.

Editorial Staff

Greg, as long as you only display an excerpt and send the user over to our site to read the full article, then it is not scraping. As you said, it is curation. Tons of popular sites do that (e.g. Reddit, Digg).

Admin

ryan

My site has a lot of original security articles, and a couple have been scraped. The site that scraped me was featured in Yahoo! News with my article, and people were commenting on it. I dealt with the issue by commenting that I was the original author and replying to a few comments. I had internal links; that’s how I found out so quickly. A trick I am going to write about is showing visitors who arrive from a scraper’s site a banner or image telling them what happened. The never-ending request suggestion sounds illegal under the Computer Fraud and Abuse Act. I am not a lawyer; I just write about security, so I have to know the computer security laws.

I don’t like that your form didn’t accept my company’s email as a valid email address.
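The banner idea relies on the internal links inside the copied post: readers who click them arrive on your site with the scraper’s page as the Referer (when the browser sends one). A minimal Python sketch of the check, assuming you keep your own list of offending domains (the names below are made up), might look like this; how the banner is then displayed depends on your theme and is not shown.

```python
from typing import Optional
from urllib.parse import urlparse

# Hypothetical list of domains you have caught republishing your posts.
KNOWN_SCRAPERS = {"copycat-blog.test", "autoblog.test"}


def scraper_banner(referer: Optional[str], original_url: str) -> Optional[str]:
    """Return a notice for visitors arriving from a known scraper site, else None."""
    if not referer:
        return None
    host = (urlparse(referer).hostname or "").lower()
    # Match the scraper's domain itself and any of its subdomains.
    if any(host == d or host.endswith("." + d) for d in KNOWN_SCRAPERS):
        return (f"You arrived from {host}, which republished this article without "
                f"permission. The original is at {original_url}.")
    return None


print(scraper_banner("https://www.autoblog.test/post-1", "https://example.com/post-1"))
```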

Editorial Staff

Sorry, Ryan, that our form didn’t accept your business email. Not sure what happened there, but it is meant to accept all valid emails.

Admin

andre

How do I use this code? Can you provide more details or a tutorial? Thank you.

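RewriteEngine On
# Placeholder prefix: replace 203\.0\.113\. with the scraper's IP (octets must be 0-255)
RewriteCond %{REMOTE_ADDR} ^203\.0\.113\.
RewriteRule .* http://dummyfeed.com/feed [R,L]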

Editorial Staff

You would have to edit the .htaccess file.

Admin

Ali Rashid

Nice and informative write-up. I like your approach of taking advantage of the scrapers. However, blocking an IP may not always work: a serious scraper will often use a list of anonymous or free proxies, so blacklisting one IP might not be effective, because the scraper changes it often.

One solution is to write a small script that detects abnormal traffic from a given IP, say more than 20 hits per second, and challenges it with a CAPTCHA; if there is no reply, put the IP on a temporary blacklist for about 30 minutes. You can harden it with some JavaScript that checks for mouse, touch, or keyboard activity after a few hits; if none is detected, you can again put the scraper on the temporary blacklist. It worked like a charm for us.
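A minimal Python sketch of that throttling scheme, using the commenter’s example thresholds (more than 20 hits per second, a 30-minute ban). The class and method names are hypothetical, the CAPTCHA itself is not shown (the caller reports whether it was solved and treats an unanswered challenge as failed), and the JavaScript mouse/keyboard check is left out.

```python
import time
from collections import defaultdict, deque
from typing import Callable, Deque, Dict


class ScraperThrottle:
    """Per-IP sliding-window limiter with a temporary blacklist."""

    def __init__(self, max_hits: int = 20, window: float = 1.0,
                 ban_seconds: float = 30 * 60,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_hits = max_hits
        self.window = window
        self.ban_seconds = ban_seconds
        self.clock = clock
        self.hits: Dict[str, Deque[float]] = defaultdict(deque)
        self.banned_until: Dict[str, float] = {}

    def check(self, ip: str) -> str:
        """Classify one request from ip as 'blocked', 'challenge', or 'allow'."""
        now = self.clock()
        if self.banned_until.get(ip, 0.0) > now:
            return "blocked"
        recent = self.hits[ip]
        recent.append(now)
        # Forget requests that fell out of the sliding window.
        while recent and recent[0] <= now - self.window:
            recent.popleft()
        if len(recent) > self.max_hits:
            return "challenge"  # caller should show a CAPTCHA
        return "allow"

    def challenge_result(self, ip: str, solved: bool) -> None:
        """Record the CAPTCHA outcome; failed or unanswered means a temporary ban."""
        if solved:
            self.hits[ip].clear()
        else:
            self.banned_until[ip] = self.clock() + self.ban_seconds
```

The clock is injectable so the logic can be tested without waiting. If you run several web server processes, this state would need to live somewhere shared (for example a cache) rather than in one process’s memory.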

Arihant

Your solutions are good enough for content scrapers.

But what if people are manually copying and pasting content into their Facebook pages?

We have implemented Tynt, but they remove the link back to the original article. Any ideas on how to handle this kind of situation?

Editorial Staff

If people really want to steal your content, there is nothing you can do about it. It’s a sad truth, but it’s a truth.

Admin

Garratt

Actually, there’s a plugin created by IMWealth Builders. It’s probably the only one of their plugins I like; the rest are pretty trashy and involve scraping e-commerce sites (CB, Azon, CJ, etc.) for affiliate commissions.

It’s called “Covert Copy Traffic”, and it lets you insert any text before or after a set number of words. Say I set it to add “This content was taken from xxxxxxx.com” after 18 words. Then any time someone copied and pasted 18 or more words from the website, it would add that text at the bottom; with 17 words or fewer, it would do nothing.

These were just example settings. It’s a pretty useful plugin and works like a charm. I’ve tried just about every way I could think of to bypass the text insertion, but it seems to be impossible. Plugin is too stronk.
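The behaviour described above boils down to a small text transformation. In a browser it would run in JavaScript inside a handler for the page’s copy event; the Python sketch below shows only the transformation itself, treating 18 words as the trigger (the comment says 17 words or fewer does nothing). The function name, default threshold, and message are illustrative, not the plugin’s actual code.

```python
def add_attribution(copied: str, source_url: str, min_words: int = 18) -> str:
    """Append an attribution line to copied text of at least min_words words."""
    if len(copied.split()) < min_words:
        return copied  # short quotes are left untouched
    return f"{copied}\n\nThis content was taken from {source_url}"


short = "Just a short quote."
print(add_attribution(short, "https://example.com") == short)  # True
```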

Editorial Staff

Sounds like you are describing this tutorial here:

https://www.wpbeginner.com/wp-tutorials/how-to-add-a-read-more-link-to-copied-text-in-wordpress/

Garratt

Yeah, that’s right. You can just use that script to say “Content came from yourwebsite.com” rather than “Read More”.

Jennae Barker

Is it true that their Amazon etc. programs are scrapers? If that is the case, I have made a whopper of a mistake on a purchase from them. Luckily, I have not used it yet.

Garratt

Yeah, Jennae, it’s legal in the sense that Amazon allows you to copy content from their pages. It helps their sales; affiliates are the reason Amazon is Amazon.

However, Google and other search engines (that matter) just consider it a “thin affiliate site”, meaning it has no original content. Therefore, such sites don’t rank unless a certain percentage of the site’s content is original as well.

A scraper is nothing more than a spider/crawler. Generally it runs in socket mode, though some run in a browser.

Just because it’s labeled as a scraper doesn’t make it bad per se. I use scrapers and spiders regularly to check my site for unnatural links, to check others’ sites for competition analysis and keyword research, and for a variety of other tasks that do not harm anyone but benefit me.

However, I don’t like or condone scraping for the purpose of copyright infringement, which is what this discussion is really about.

Google uses its spider, “Googlebot”, to index the web, as do hundreds of other search engines with their own spiders; there are thousands, even hundreds of thousands, of spiders crawling the web for a variety of purposes. Google also scrapes websites to “cache” them, as do many important services we rely on, such as historical web archives.

Troy

I’m about to begin aggressively searching for sites that are copying my content and getting the content removed. I know it is affecting how my site ranks, so I have to do something about it. Any idea how much has to be copied before you can send DMCA notices? Is a paragraph of an article enough to legally call it plagiarized?

Editorial Staff

We are not legal experts here, so we refrain from giving legal advice on this site.

Admin

Dallas

You fail to mention that any self-respecting autoblogger will strip out your links and insert their own affiliate links rather than using your content as it comes, so your approach to getting links from them will usually fail.

Editorial Staff

Is there such a thing as a self-respecting autoblogger? If they had any self-respect, they would write original content.

Admin

David Halver

Agreed! There’s a very special “Hot Place” near the center of the Earth for Spammers, Scrapers and Auto-Bloggers…

VeryCreative

I think that the best idea is to include affiliate links.

After the last Penguin update, my website was penalized. I started to analyze it and discovered that many other sites had copied my content. I don’t know why, but those websites rank better than me in search engines using my content.

Editorial Staff

Not just affiliate links. Include as many internal links as you can, because if those sites are linking back to your other pages, Google will KNOW that you are the authority site.

Admin

Bayer

Hi wpbeginner.com Team. I really appreciate this article, but I have one question regarding internal links in your pages/posts.

I suppose you mean ‘absolute’ links? Otherwise this may not work in your favour once the content has been scraped. So far I have always used relative links, as I suppose you do. Which is the best method? Cheers!

Editorial Staff

We always use absolute links because they keep things working smoothly.
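Why this matters for scraping: when a scraper republishes your post on its own domain, a relative link such as /beginners-guide/ is resolved against the scraper’s domain, so it no longer points back to you, whereas an absolute link still does. A rough Python sketch of converting relative links to absolute ones with urllib.parse.urljoin is below. The function name and the example URLs are hypothetical, and the regex only handles double-quoted href attributes; a real implementation would use an HTML parser.

```python
import re
from urllib.parse import urljoin

# Matches double-quoted href attributes; a simplification (single quotes are ignored).
HREF = re.compile(r'href="([^"]*)"')


def absolutize_links(html: str, page_url: str) -> str:
    """Rewrite each href="..." in html as an absolute URL resolved against page_url."""
    return HREF.sub(lambda m: f'href="{urljoin(page_url, m.group(1))}"', html)


post = '<a href="/beginners-guide/">guide</a> and <a href="https://example.com/x/">x</a>'
print(absolutize_links(post, "https://example.com/blog/my-post/"))
```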

Gautam Doddamani

First of all, your tutorial is just fantastic. Hats off! Just one question: how do I know if a site is a scraper site? I used your method and found that Google Webmaster Tools reports 262 links to my site, from many sites I don’t know of, so I am confused. How can I check whether a site is a scraper site or an authoritative site? Is there a tool available for that? Thanks in advance!

Editorial Staff

Trust me, no authority site will ever STEAL your article word-for-word.

Admin
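As a rough do-it-yourself check, you can compare the text of a suspect page with your original article: a site that copied the post word for word will share almost all of its words in the same order. The Python sketch below uses difflib; the function name is hypothetical, fetching the page and stripping its HTML are not shown, and any cutoff you choose (say, treating 0.8 or more as a copy) is an arbitrary assumption.

```python
from difflib import SequenceMatcher


def copied_fraction(original: str, suspect: str) -> float:
    """Fraction of the original's words matched, in order, in the suspect text."""
    a = original.lower().split()
    b = suspect.lower().split()
    if not a:
        return 0.0
    # autojunk=False so common words are not ignored on long articles.
    matcher = SequenceMatcher(None, a, b, autojunk=False)
    matched = sum(block.size for block in matcher.get_matching_blocks())
    return matched / len(a)


mine = "content scraping is when your posts are republished without permission"
theirs = "Latest news: content scraping is when your posts are republished without permission"
print(copied_fraction(mine, theirs))  # 1.0
```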

Gautam Doddamani

Yes, that is true, but what if I don’t want to go looking for my article on those scraping sites? I know my article is there because GWT reports it, and I just want to block those IP addresses by inserting the RewriteCond rules into the .htaccess file. I don’t want to waste my time searching those bad sites for my article or asking them to take it down.
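When there are several scraper IPs to block, writing the rules by hand gets tedious. The Python sketch below generates a block in the same shape as the rules quoted earlier in this thread (a RewriteCond on REMOTE_ADDR followed by a redirecting RewriteRule), chaining several addresses with mod_rewrite’s [OR] flag. The function name is hypothetical, the redirect target is the dummyfeed.com placeholder from the thread, and placing the output above the WordPress rules in .htaccess is an assumption about your setup. As noted earlier in the thread, scrapers that rotate proxies will slip past IP-based rules.

```python
import re
from typing import List


def htaccess_block(ips: List[str], target: str = "http://dummyfeed.com/feed") -> str:
    """Build mod_rewrite lines that redirect requests from any of the given IPs."""
    if not ips:
        # A RewriteRule with no RewriteCond would redirect every visitor.
        raise ValueError("need at least one IP address")
    lines = ["RewriteEngine On"]
    for i, ip in enumerate(ips):
        # Escape the dots and anchor the pattern so only this exact address matches.
        cond = f"RewriteCond %{{REMOTE_ADDR}} ^{re.escape(ip)}$"
        if i < len(ips) - 1:
            cond += " [OR]"  # chain conditions: any one IP is enough
        lines.append(cond)
    lines.append(f"RewriteRule .* {target} [R,L]")
    return "\n".join(lines)


print(htaccess_block(["203.0.113.7", "198.51.100.22"]))
```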

Nathan

Thank you for this article, and for your site in general! I like it so much that I had wondered how I would keep track of this resource. And now I see the subscription options below. What a way to get a comment!

Yeasin

Preventing content scraping is almost impossible. I don’t think content scrapers hurt me in any way. They are just confirming that I have some high-quality content. Google is smart enough to detect the original publishers. No one should worry.

mrwindowsx

Really informative. If you use Cloudflare, there is a new app called ScrapeShield that lets you easily protect and track/monitor your site content for free.

wpbeginner

@mrwindowsx Oh didn’t know that. Thanks for pointing it out.

Gautam Doddamani

Wow, that’s great, man. Do you use Cloudflare? I just wanted your review because I have never used that CDN service. I know it is free and all, but I think my site’s load time is already so good that I didn’t need it. Now that ScrapeShield is there, I think I will definitely check it out. What other apps do we get if we start using Cloudflare? Thanks

Matt

Hello,

IMO @cloudflare really is awesome. I have two sites on it (my blog and my wife’s), and it really is incredibly fast, and that’s not to mention all of the security, traffic analysis, and app support (automatic app installs) that they provide.

I know that all hosting setups are different, but I have both of our sites running on the Media Temple (gs) Grid Service. I can honestly say that our sites run faster now than they did when I was using W3 Total Cache and Amazon S3 as my CDN. Actually, I still use W3TC on my site to minify and cache my content, but I use CloudFlare for CDN, DNS, and security services.

Highly recommend… Actually, I would really appreciate it if someone at WPBeginner would give us their in-depth, experienced opinion of the CloudFlare services. To me, they have been awesome!

shivabeach

There is also a plugin, whose name eludes me at the moment, that does the Google search for you. It also adds a code to your RSS feed that the app searches for.
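The plugin mentioned here is not named, so this is only a generic Python sketch of the idea, not its code: embed a unique token in each feed item, then search the web (or a suspect page) for that token. Because only the feed carries the token, a page that contains it most likely copied your feed. The function names, the HMAC-based token, and the visible “Ref:” line are all assumptions; hooking this into WordPress’s feed output would be done in PHP and is not shown.

```python
import hashlib
import hmac

# Hypothetical site secret; keep it private so scrapers cannot predict the tokens.
SECRET = b"replace-with-a-random-secret"


def feed_fingerprint(post_id: int, secret: bytes = SECRET) -> str:
    """Short, stable token that identifies one post."""
    digest = hmac.new(secret, str(post_id).encode(), hashlib.sha256).hexdigest()
    return "fp-" + digest[:12]


def add_fingerprint(item_html: str, post_id: int) -> str:
    """Append the post's token to its feed item, so copies of the item carry it too."""
    return f"{item_html}\n<p>Ref: {feed_fingerprint(post_id)}</p>"


def contains_fingerprint(page_text: str, post_id: int) -> bool:
    """True if a page (for example, a suspected scraper) contains the post's token."""
    return feed_fingerprint(post_id) in page_text
```

Searching for the token string then turns up pages that republished the feed item, which is the same job the commenter describes the plugin doing.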

MuhammadWaqas

Great post. I know there are many autoblogs fetching my content, although since the Penguin update my site has been getting 3 times more traffic from Google than before. But after reading about so many disasters for original content creators, I’m worried about future penalties from Google.

In my experience, Google usually respects high-PR sites with good authority backlinks, but my site is just one year old and its PR is less than 5.

I try to contact the scrapers, but most of them don’t have contact forms, so I think I’ll try the .htaccess method to block the scrapers’ IP addresses. On the other hand, some of them may use FeedBurner.

Garratt

Personally, I don’t bother with RSS, as most users don’t use it. Instead, supply a newsletter feed. It does the same trick, plus you get emails to market to (if done correctly). In my experience, the majority of people are more likely to subscribe to a blog than to bookmark an RSS feed, so it’s better to turn off RSS. You can do this using WordPress SEO by Yoast and various other plugins.

Then, if you also implement the strategies mentioned above, you should be good. Remove all unnecessary headers as well (RSD, WLM, etc.).

A couple of scrapers will still be able to scrape effectively, but those tricks will stop a great many of them.